How technology can create and combat synthetic media

What are Deep Fakes?

Deep Fakes are a type of synthetic media that uses artificial intelligence (AI) to manipulate or generate audio, video, or images. They can create realistic-looking content that appears to show people doing or saying things that they never did or said. For example, a Deep Fake video could show a politician making a controversial statement, a celebrity endorsing a product, or a person’s face swapped with another person’s face.

One example is a Deep Fake of Arnold Schwarzenegger starring in James Cameron’s Titanic.

How do Deep Fakes work?

Deep Fakes are created using deep learning, a branch of AI that involves training neural networks on large amounts of data. Neural networks are mathematical models that learn patterns and features from the data and apply them to new inputs. There are different methods for creating Deep Fakes, but one of the most common relies on generative adversarial networks (GANs).

GANs consist of two neural networks: a generator and a discriminator. The generator tries to create fake content that looks like real content, while the discriminator tries to distinguish between the real and the fake content. The two networks compete, improving their skills over time. The result is fake content that can fool both humans and machines.
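The generator-versus-discriminator loop described above can be sketched in a few lines. This is a toy illustration rather than how real Deep Fake tools are built: the "content" is a single number drawn from a bell curve centred on 4, the generator is a straight line `a*z + b`, and the discriminator is a logistic classifier. The class names, the learning rate, and the target distribution are all assumptions chosen for the example.

```python
import math
import random


def sigmoid(t):
    # Clamp the input so math.exp never overflows.
    t = max(-60.0, min(60.0, t))
    return 1.0 / (1.0 + math.exp(-t))


class Discriminator:
    """D(x) = sigmoid(w*x + c): estimated probability that x is real."""

    def __init__(self):
        self.w, self.c = 0.0, 0.0

    def prob_real(self, x):
        return sigmoid(self.w * x + self.c)

    def step(self, real, fake, lr):
        # Gradient ascent on log D(real) + log(1 - D(fake)).
        gw = gc = 0.0
        for x in real:
            err = 1.0 - self.prob_real(x)  # push D(real) towards 1
            gw += err * x
            gc += err
        for x in fake:
            err = -self.prob_real(x)  # push D(fake) towards 0
            gw += err * x
            gc += err
        n = len(real) + len(fake)
        self.w += lr * gw / n
        self.c += lr * gc / n


class Generator:
    """G(z) = a*z + b turns random noise z into a fake sample."""

    def __init__(self):
        self.a, self.b = 1.0, 0.0

    def sample(self, zs):
        return [self.a * z + self.b for z in zs]

    def step(self, zs, disc, lr):
        # Gradient ascent on log D(G(z)): make fakes look real to D.
        ga = gb = 0.0
        for z in zs:
            x = self.a * z + self.b
            err = (1.0 - disc.prob_real(x)) * disc.w
            ga += err * z
            gb += err
        self.a += lr * ga / len(zs)
        self.b += lr * gb / len(zs)


def train(steps=2000, batch=64, lr=0.05, seed=0):
    """Alternate discriminator and generator updates (toy settings)."""
    rng = random.Random(seed)
    gen, disc = Generator(), Discriminator()
    for _ in range(steps):
        real = [rng.gauss(4.0, 0.5) for _ in range(batch)]  # "real" data
        fake = gen.sample([rng.gauss(0.0, 1.0) for _ in range(batch)])
        disc.step(real, fake, lr)
        gen.step([rng.gauss(0.0, 1.0) for _ in range(batch)], disc, lr)
    return gen, disc
```

Each discriminator step rewards telling real from fake, and each generator step nudges the fakes towards whatever the discriminator currently rates as real. Even in this tiny setting the two models can chase each other rather than settle, which is one reason real GANs are considered hard to train.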

What are the threats of Deep Fakes?

Deep Fakes pose several threats to individuals, organizations, and society. Some of the potential harms of Deep Fakes are:

- Disinformation and propaganda: Deep Fakes can be used to spread false or misleading information, influence public opinion, undermine trust in institutions, and incite violence or conflict.

- Identity theft and fraud: Deep Fakes can be used to impersonate someone’s voice, face, or biometric data, and gain access to their personal or financial information, accounts, or devices.

- Blackmail and extortion: Deep Fakes can be used to create compromising or embarrassing content that can be used to coerce or threaten someone.

- Privacy and consent violation: Deep Fakes can be used to create non-consensual or invasive content that can harm someone’s reputation, dignity, or mental health.

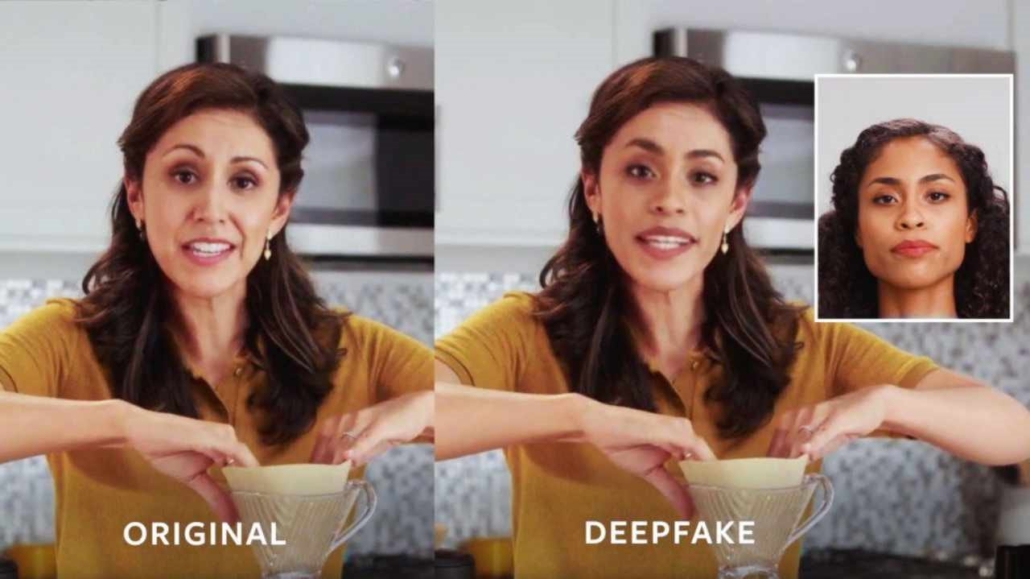

An example of how close a Deep Fake can be to the original

The people below were generated by the website www.thispersondoesnotexist.com. Such images can be used in fake social media accounts.

How are companies dealing with Deep Fakes?

While Deep Fakes pose a serious challenge, they also offer an opportunity for innovation and collaboration. Many companies are developing tools and solutions to detect, prevent, and mitigate the impact of Deep Fakes. Some examples are:

- Adobe: Adobe leads the Content Authenticity Initiative (CAI), an effort that aims to provide a secure and verifiable way to attribute the origin and history of digital content. The CAI approach attaches cryptographically signed metadata to content, creating a tamper-evident record of who created or edited it, and allows users to verify the authenticity and integrity of the content.

- Meta: Meta, formerly known as Facebook, launched the Deepfake Detection Challenge (DFDC) together with industry and academic partners to accelerate the development of Deep Fake detection technologies. The DFDC was a global competition that invited researchers and developers to create and test algorithms that can detect Deep Fakes in videos. It also provided a large and diverse dataset of real and fake videos for training and testing purposes.

- Microsoft: Microsoft has developed a tool called Video Authenticator that can analyze videos and images and provide a confidence score of how likely they are to be manipulated. Video Authenticator uses a machine learning model that is trained on a large dataset of real and fake videos, and can detect subtle cues such as fading, blurring, or inconsistent lighting that indicate manipulation. Microsoft also announced a separate system, including a browser extension, that checks certificates and hashes attached to content so that readers can tell whether it is authentic and unchanged.

- X (formerly known as Twitter): X/Twitter has implemented a policy against sharing synthetic or manipulated media that is likely to deceive people and cause harm. Under the policy, X/Twitter may label such media, or remove it if it is likely to cause serious harm. X/Twitter uses a combination of human review and automated systems to enforce the policy and provide context and warnings to users.

- Deeptrace: Deeptrace is a startup that specializes in detecting and analyzing Deep Fakes and other forms of synthetic media. Deeptrace offers a range of products and services, such as Deeptrace API, Deeptrace Dashboard, and Deeptrace Intelligence, that can help clients identify, monitor, and respond to malicious or harmful uses of Deep Fakes. Deeptrace also publishes reports and insights on the trends and developments of synthetic media.
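The provenance idea in the Adobe entry above, a tamper-evident record of who created or edited a piece of content, can be illustrated with a small sketch. This is not the CAI's actual format or API. The field names, the `SECRET_KEY`, and the helper functions are hypothetical, and a shared-secret HMAC stands in for the public-key signatures a real system would use.

```python
import hashlib
import hmac
import json

# Hypothetical signing key for the demo; real systems use private keys.
SECRET_KEY = b"demo-key-not-for-production"


def _sign(payload):
    """Sign a record with HMAC-SHA256 over its canonical JSON form."""
    msg = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hmac.new(SECRET_KEY, msg, hashlib.sha256).hexdigest()


def add_entry(history, actor, action, content):
    """Return a new history with one more signed entry appended."""
    payload = {
        "actor": actor,
        "action": action,
        "content_sha256": hashlib.sha256(content).hexdigest(),
        # Linking to the previous signature chains the entries together.
        "prev_signature": history[-1]["signature"] if history else "",
    }
    return history + [{**payload, "signature": _sign(payload)}]


def verify(history, content):
    """Check every signature, the chain links, and the final content hash."""
    if not history:
        return False
    prev_sig = ""
    for entry in history:
        payload = {k: v for k, v in entry.items() if k != "signature"}
        if payload.get("prev_signature") != prev_sig:
            return False
        if not hmac.compare_digest(entry.get("signature", ""), _sign(payload)):
            return False
        prev_sig = entry["signature"]
    current = hashlib.sha256(content).hexdigest()
    return history[-1]["content_sha256"] == current


history = add_entry([], "alice", "created", b"original photo bytes")
history = add_entry(history, "bob", "cropped", b"cropped photo bytes")
print(verify(history, b"cropped photo bytes"))
print(verify(history, b"swapped-in fake bytes"))
```

Changing the content, or altering any earlier entry, makes verification fail, because each signature covers both the content hash and the signature of the entry before it.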

These are just some examples of how companies are tackling the problem of Deep Fakes. Other initiatives and collaborations from academia, government, civil society, and media are also working to raise awareness, educate users, and promote ethical and responsible use of synthetic media.